Once we had approval to move forward, my PM partner Erick Montez joined the project. Erick and I would collaborate on call review and adjacent projects for the next year and a half. Jake Rowe and Brittany Choy also joined — supporting research and taking on significant design work. I led UX and collaborated with them regularly to keep the design vision aligned.

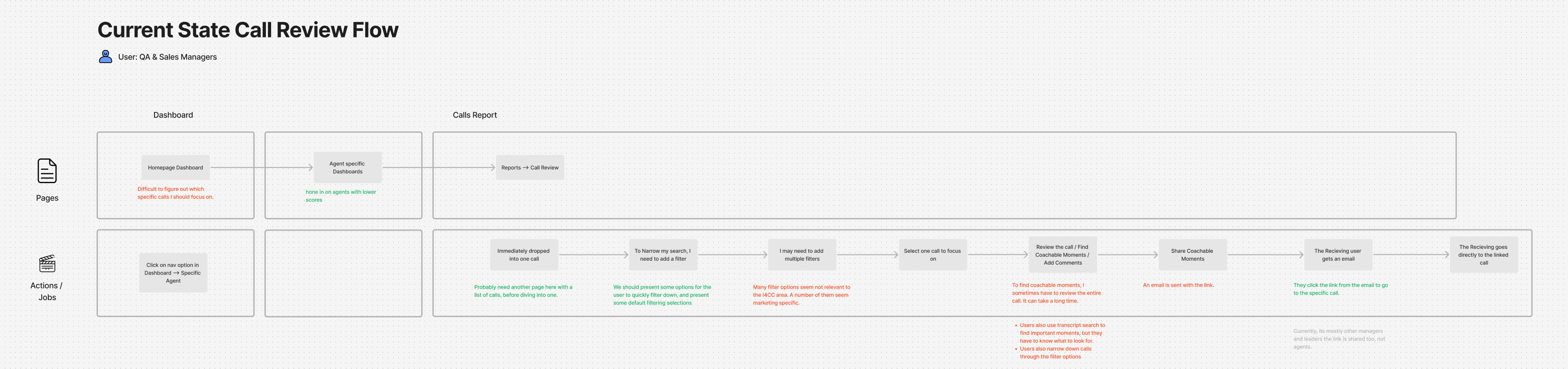

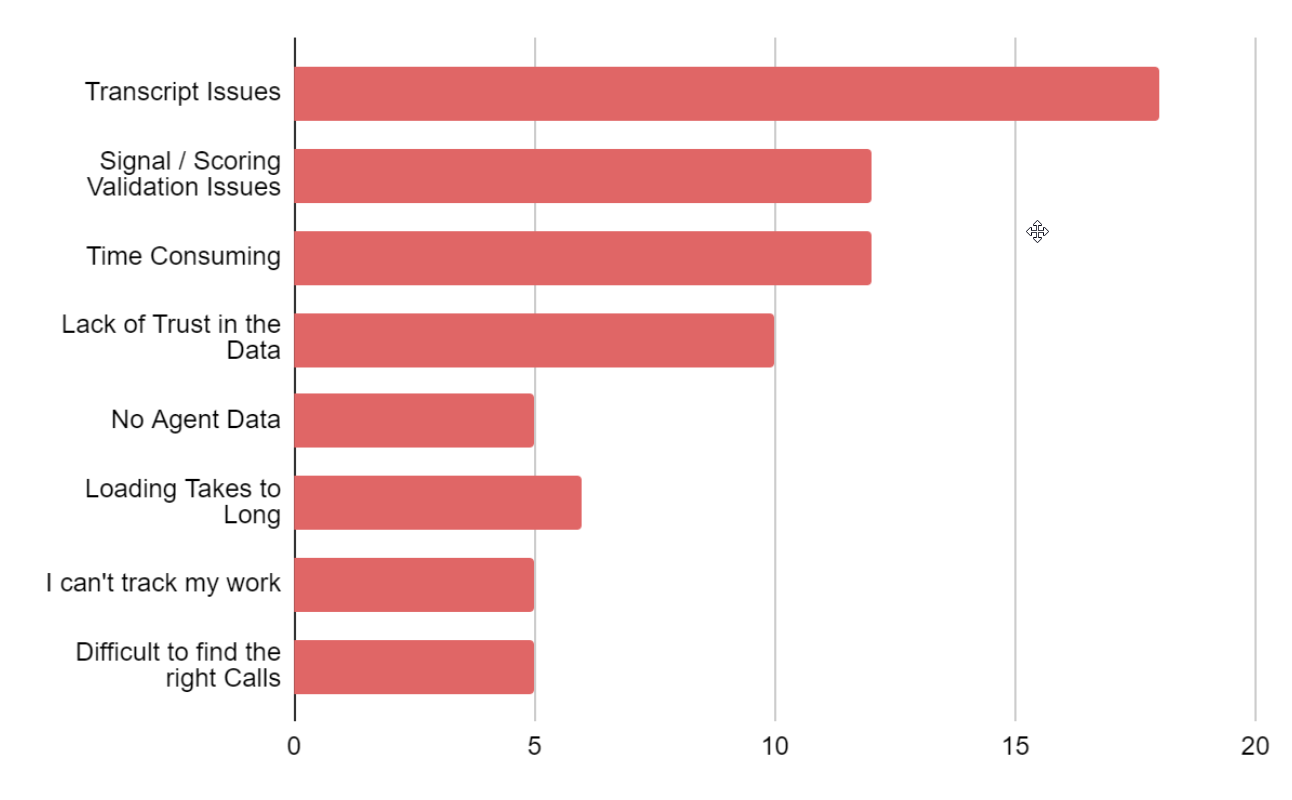

We planned a 3–4 week effort: FullStory session analysis, direct customer interviews, competitive design research, internal stakeholder conversations, and a heuristic analysis. We interviewed eight users across five customers, from 9-agent teams to organizations with 100+ agents. Every conversation focused specifically on their call review workflow and pain points.

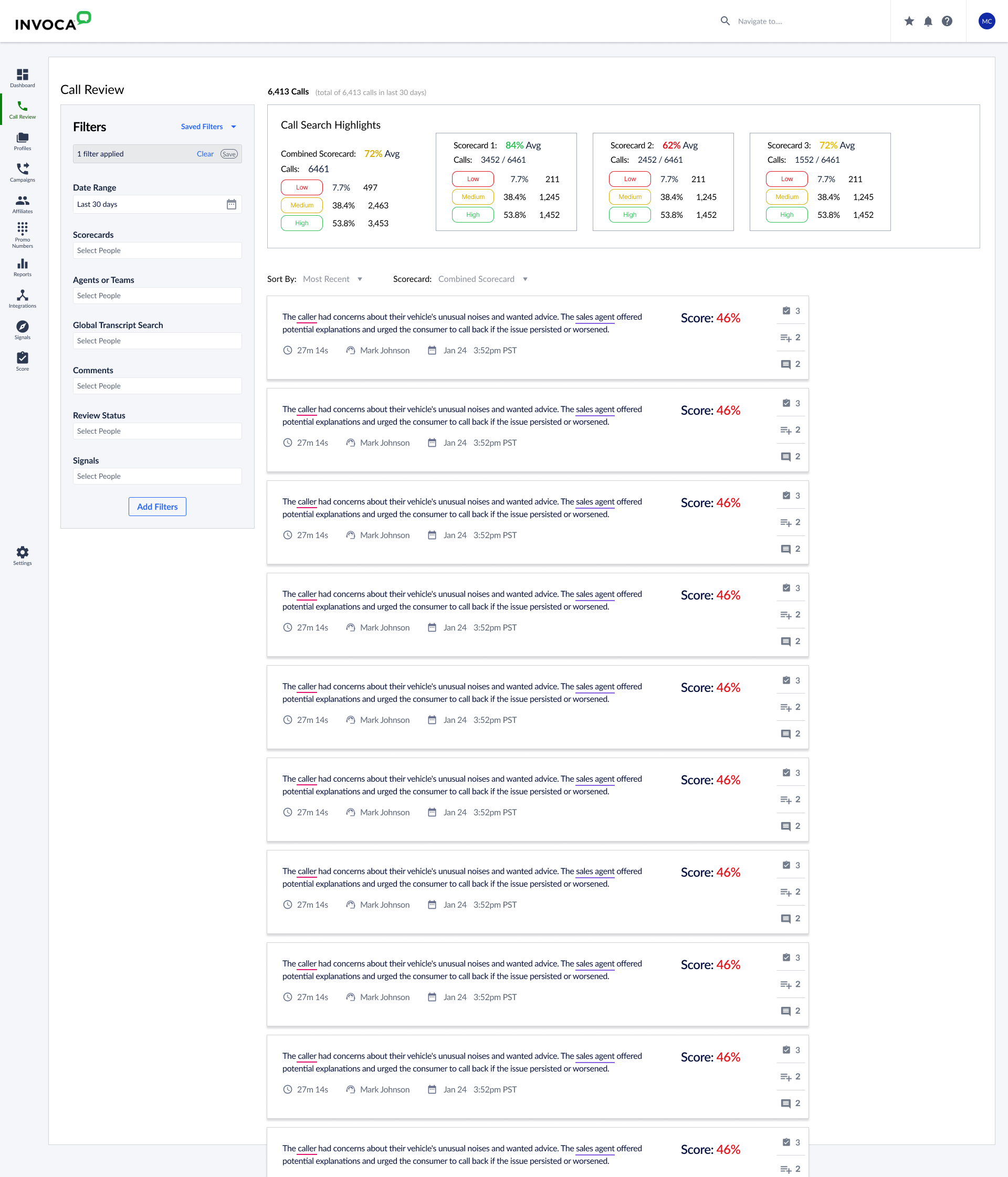

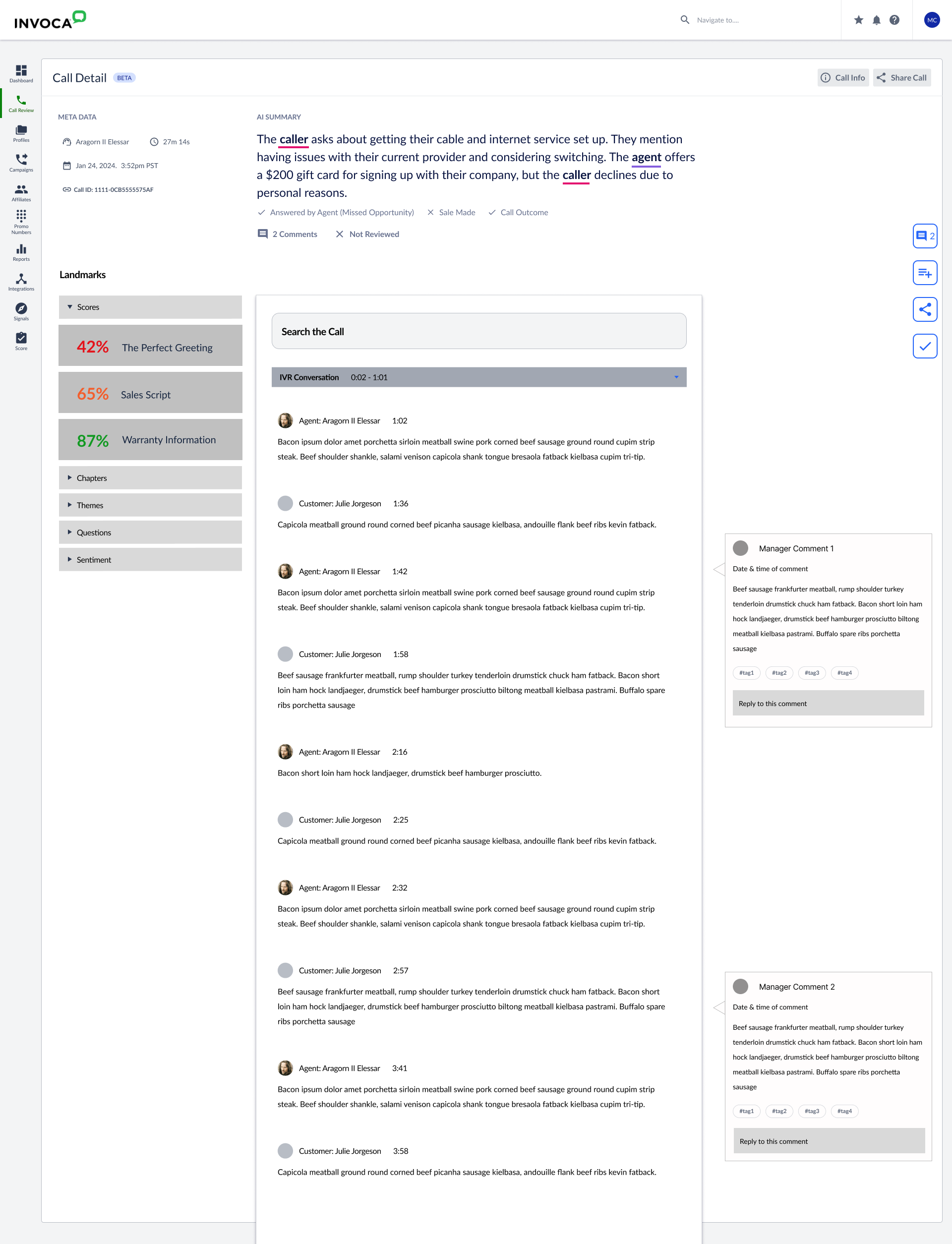

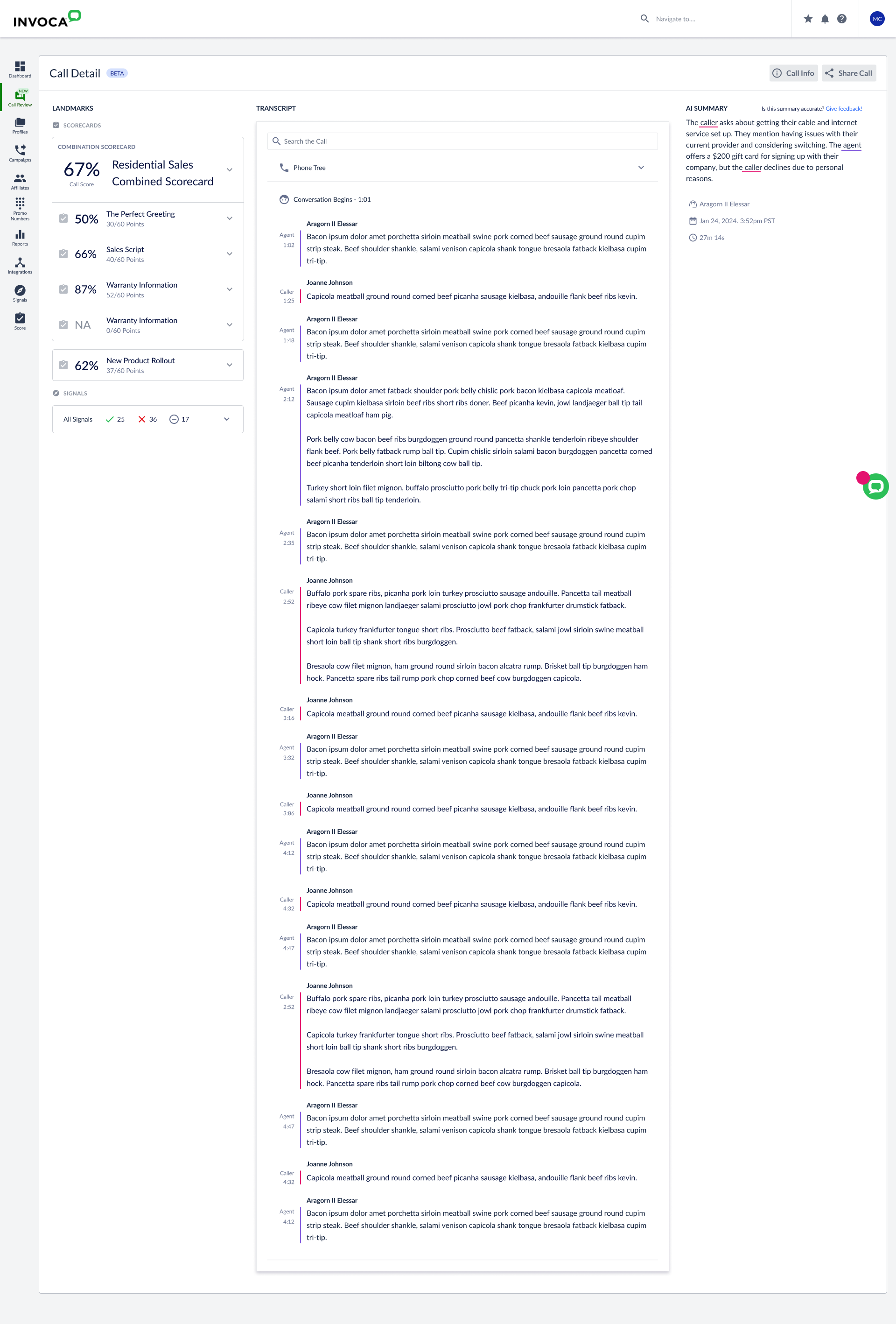

Here's what they were doing: contact center QA analysts and sales managers were manually reviewing calls, hunting for opportunities to coach their agents. They looked for bad calls that needed intervention, good calls worth replicating, and anomalies that might signal a larger trend. The coaching was the point. Better call handling meant higher conversion rates. And the pressure was real: some QA reviewers had weekly call review quotas tied directly to their compensation and bonuses. This wasn't optional busywork.

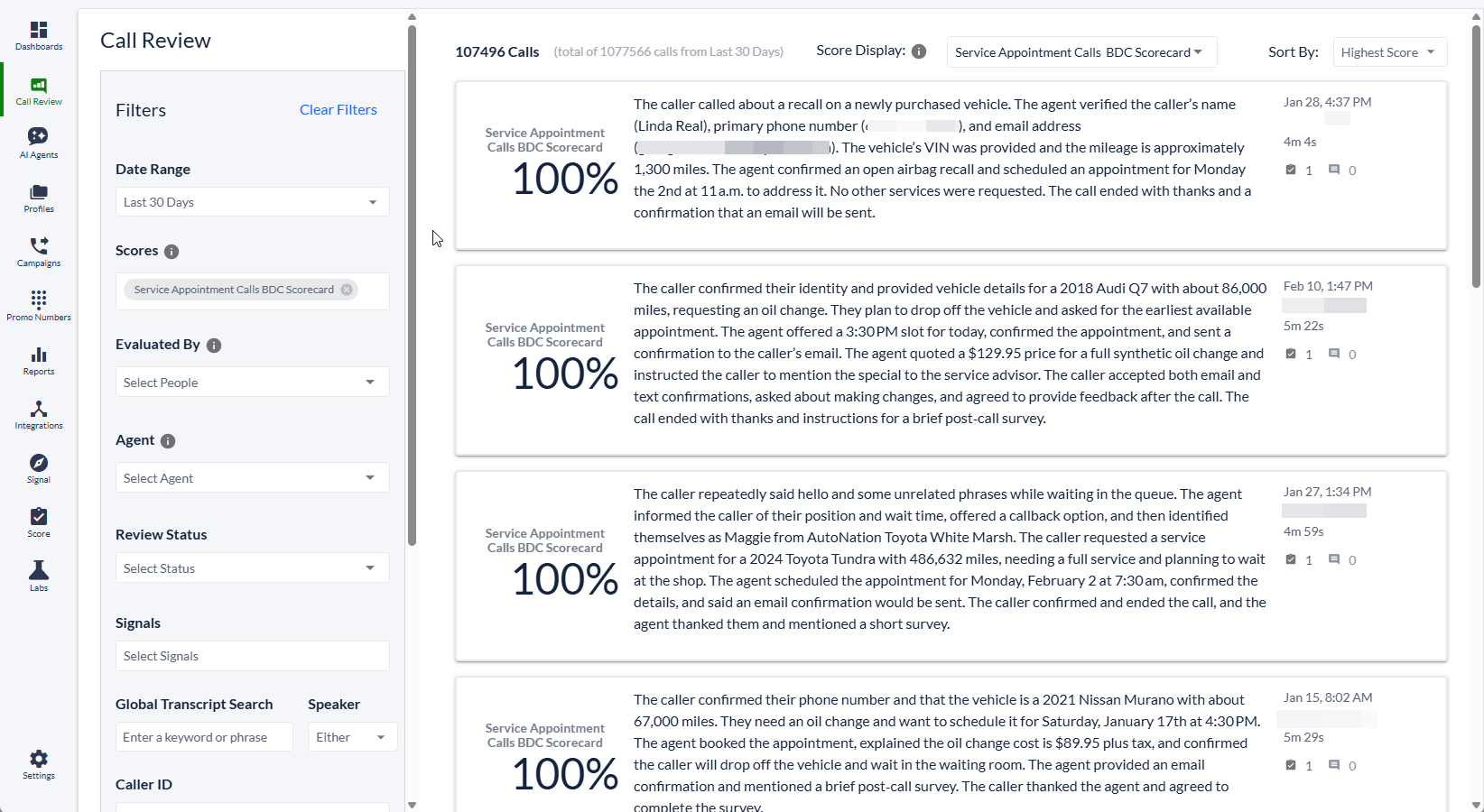

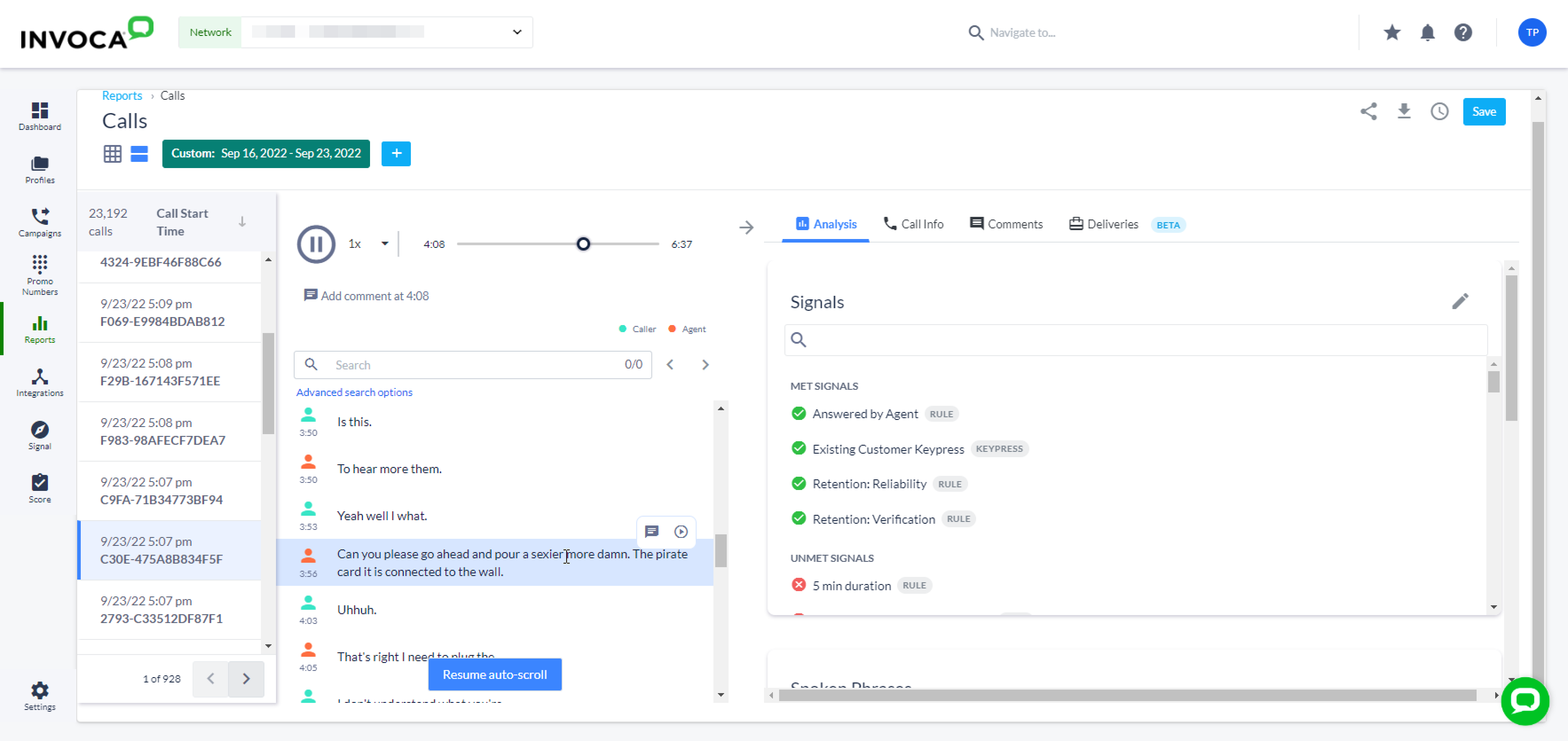

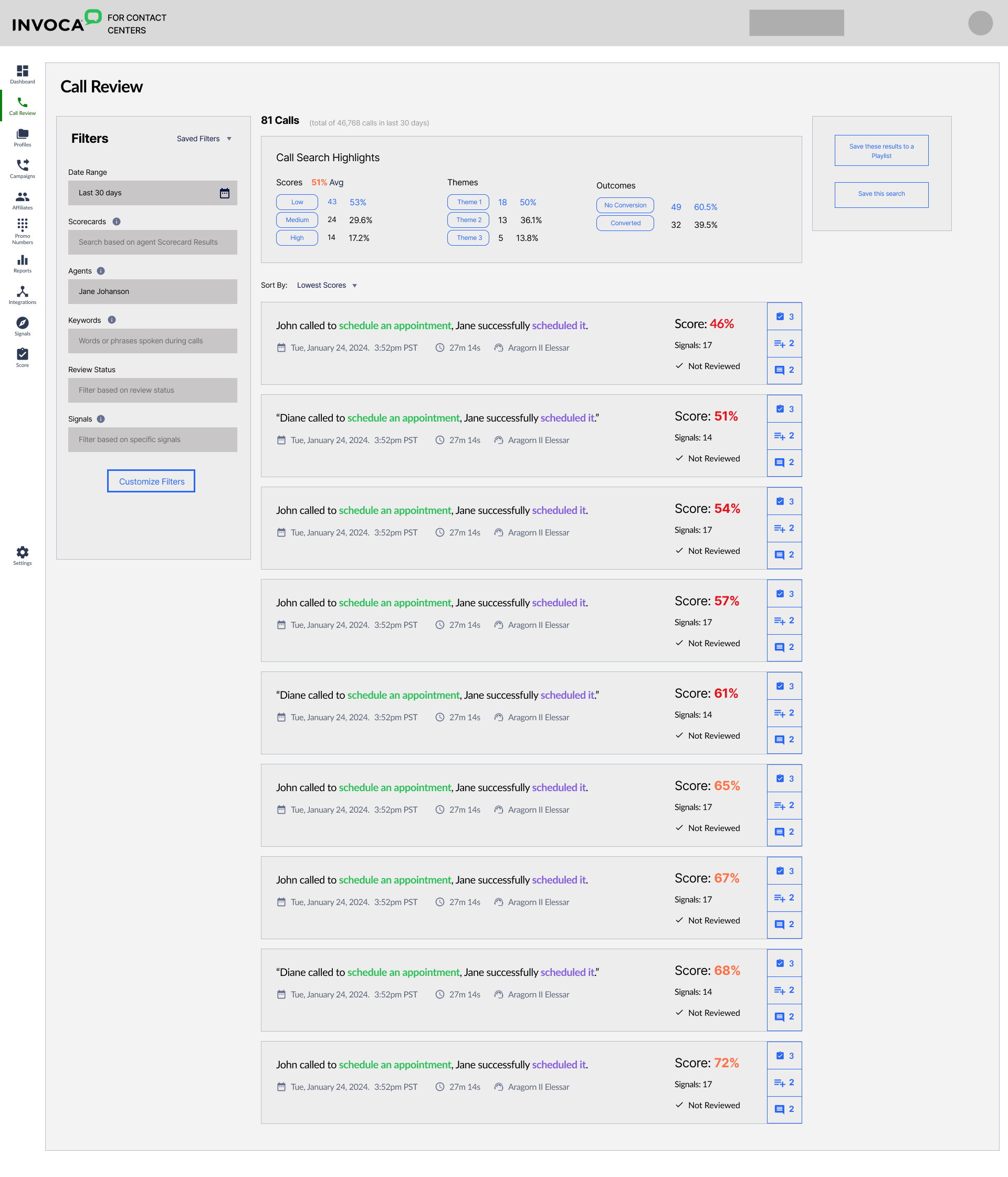

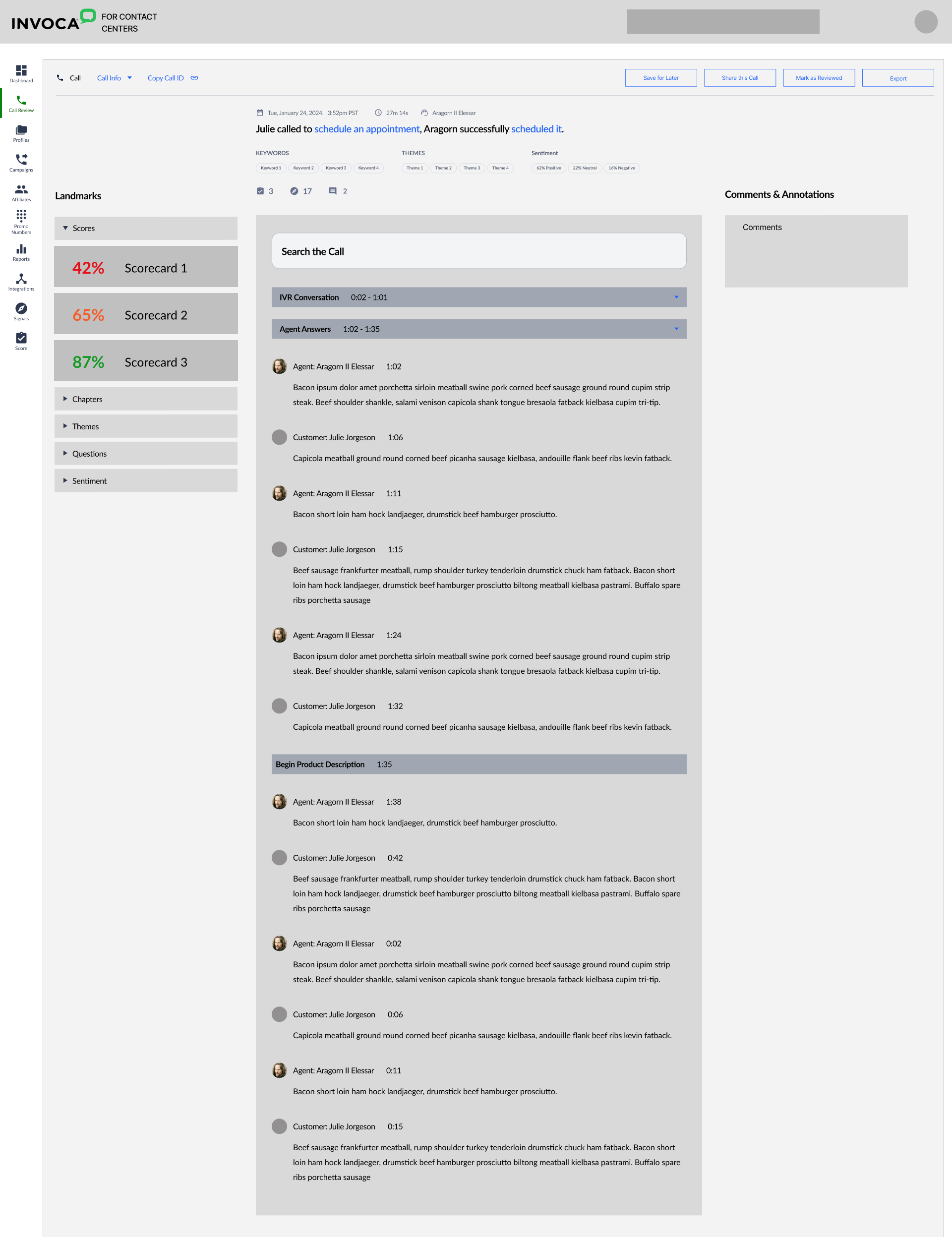

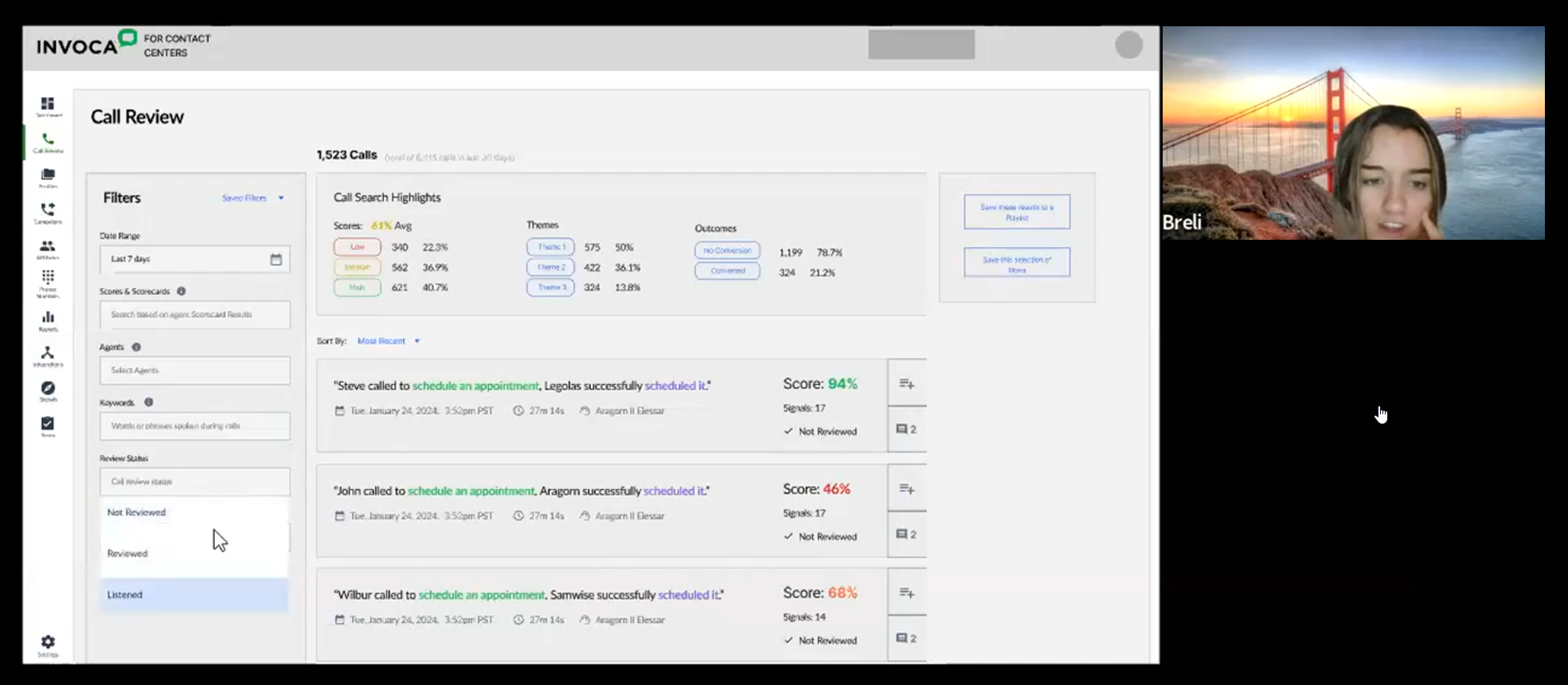

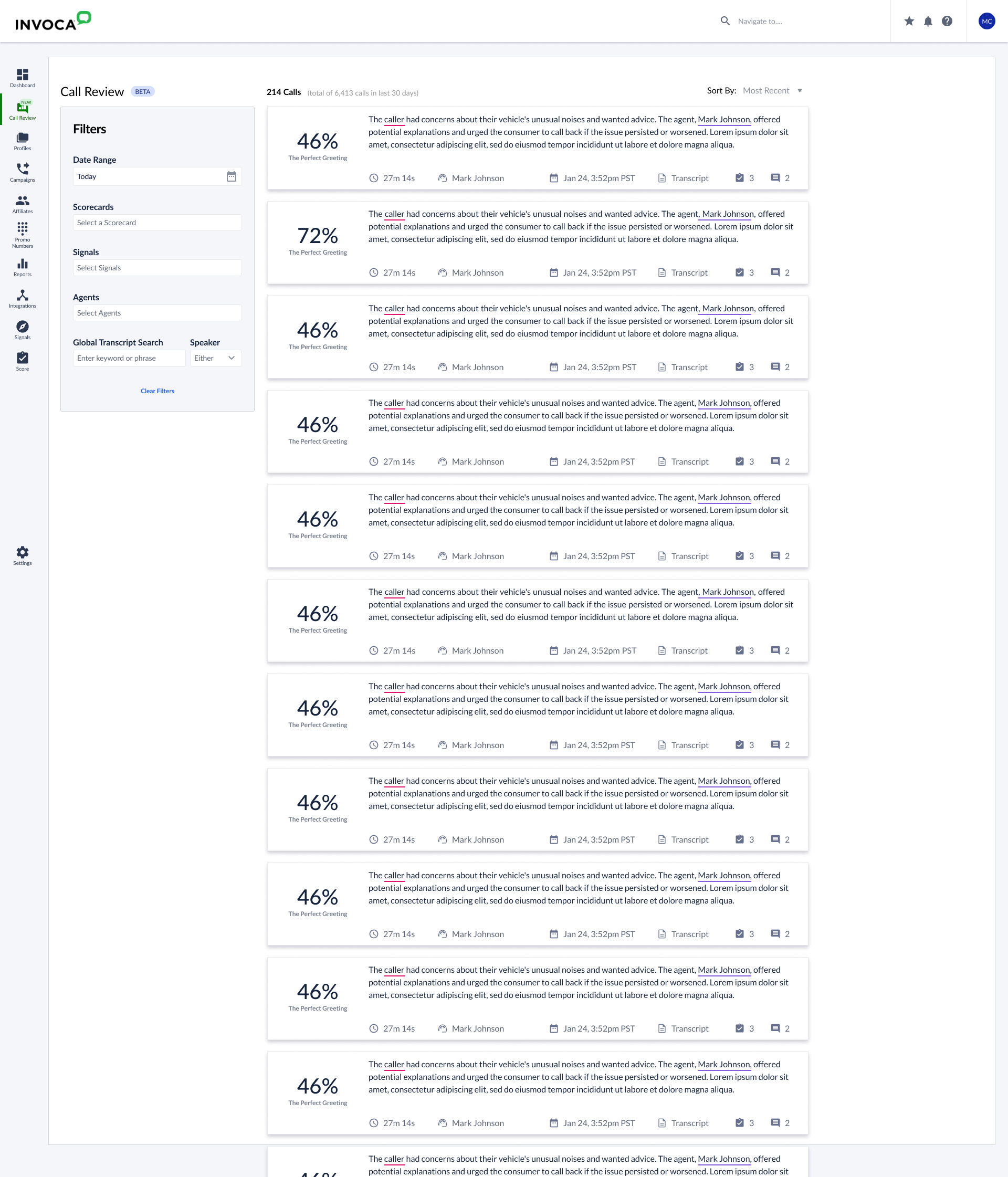

The workflow had two distinct levels. First, find the calls worth reviewing in a massive volume (for some customers, millions of calls per month). Then, find the moments worth reviewing within a specific call: the fumbled pitch, the brilliantly handled objection. The Calls Report was supposed to make both levels efficient. It wasn't.